Drilling into the notes on these papers, I was struck by Charles Taylor’s work on values. I’m currently rereading Taylor’s Sources of the Self. In many ways, current questions around AI operator intent replicate issues that surfaced with the advent of utilitarianism in the high Enlightenment 2.5 centuries ago. Namely, why would maximizing personal utility also maximize larger social goods? It’s not at all self-evident that this should be the case. Keen as the early utilitarians were to distance themselves from religion, metaphysics, or any sort of ontological hierarchy of values, pure utility, based on a personal pleasure/pain calculus, did not convincingly account for why such mechanisms would promote a more generalized good. So even the early utilitarians smuggled in what Taylor calls other moral sources, which in many cases echoed earlier ancient or Christian thinking on the nature of the good. Current efforts to create moral maximization functions for AI do not strike me as having untangled this specific knot, or as having resolved the underlying difficulties any better.

Because the 1977 Taylor article and the 1995 book cited here were not readily available to me online, I have pasted an AI summary of Taylor’s thinking below to provide context for anyone here who wants more background on the social theory behind “strong evaluation”.

Quoted Summary:

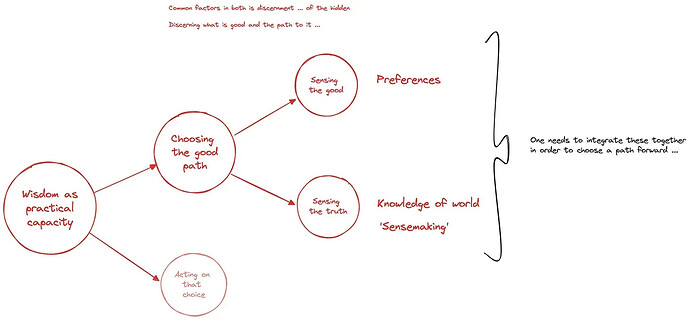

For Charles Taylor, human agency is characterized by being able to make qualitative distinctions about life, the ability to evaluate one’s own feelings and desires, and the capacity to define one’s identity and life within a shared moral framework that arises from dialogue with others. It involves a self-interpretation of actions, emotions, and preferences, and is deeply intertwined with one’s sense of morality, spirituality, and the meaning of life, distinguishing humans from other agents.

Here’s a breakdown of Taylor’s view on human agency:

Strong Evaluation:

Taylor argues that human agents are distinguished by their ability to make “strong evaluations,” meaning they can distinguish between different kinds of goods and make qualitative judgments about what is valuable or good in life. This involves understanding not just what one wants, but also what is truly worthwhile.

The Role of Identity:

Human agency is intimately tied to the concept of the self and identity. Our identity is shaped by our commitments and our “moral framework,” which provides the horizon within which we understand ourselves and our place in the world.

Dialogue and the “We”:

Taylor emphasizes that identity and self-understanding are not formed in isolation. They are developed through “dialogue”—both overt and internal—with others. This includes social interactions that provide the language and concepts to define ourselves.

Self-Interpretation:

Humans interpret their own emotions, feelings, preferences, and actions. This self-interpretation is a key aspect of being a responsible agent, as it shapes how one understands one’s own life and responses.

A Moral Horizon:

For Taylor, human agency operates within a moral framework that provides “strong qualitative distinctions”. This framework allows for questions about good and bad, meaningful and trivial pursuits, which are essential for defining one’s identity and taking a stand in the world.